How to Evaluate AI Fluency in Technical Interviews

Why banning AI is outdated and how to redesign your process without lowering the bar.

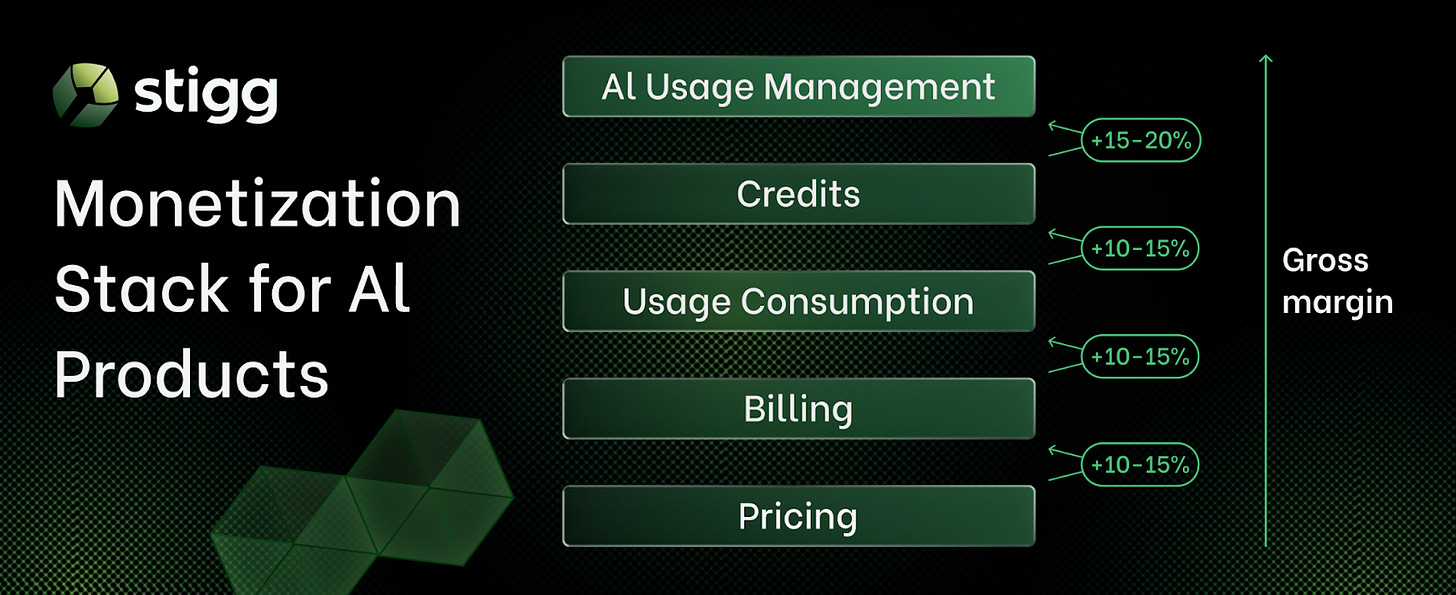

Intro

Recent reports show that AI adoption in companies is increasing. As AI becomes more common in the workplace, the hiring process must reflect its presence.

In my conversations with engineering leaders at companies such as OpenAI and Meta, I’ve learned that expectations of AI fluency are rising, especially for engineering and product roles.

Companies are starting to understand that AI is becoming an important part of tech professionals’ day-to-day work.

The question we want to answer today: How can we actually check for AI fluency in technical interviews? To help us with this, our guest author, Hamid Moosavian, director of engineering at Xe, will share practical insights on how to evaluate candidates.

Let’s introduce our guest author and get started.

Introducing Hamid Moosavian

Hamid Moosavian writes First 90 Days for Engineering Managers, a biweekly newsletter of practical management tips, and is the creator of the free First 90 Days Kit, a collection of copy-ready templates for new engineering managers. Hamid is also director of software engineering, Americas, for Xe.

Your engineers are already using AI in their work. Your engineering candidates probably are, too. But how do you know whether candidates are using AI well if you’re not interviewing for it? Hamid walks us through the importance of including AI in your interviews and how to do it.

Over to you, Hamid!

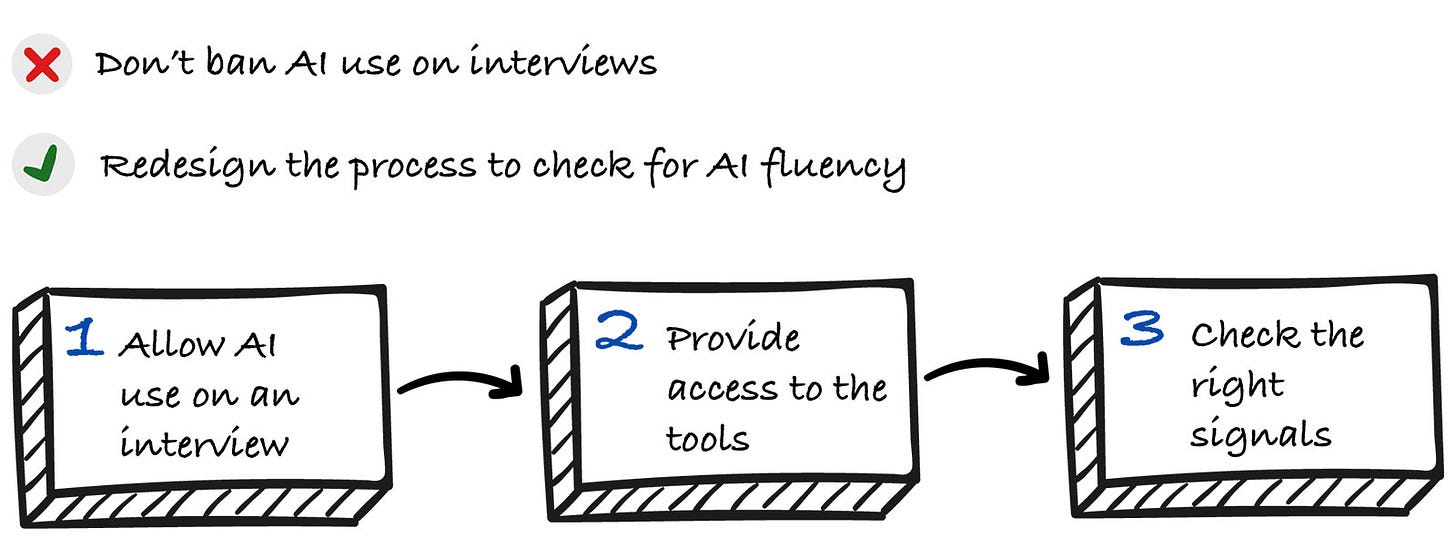

Your Tech Interview Process Shouldn’t Be AI Resistant

When Copilot and similar AI pair-programmers emerged, most teams made their hiring processes AI resistant. The logic was simple: If candidates used AI during assessments, their real skills couldn’t be evaluated.

That approach is outdated.

By the end of 2025, over half of professional developers were using AI daily. Some surveys put the number at 97% when including those planning to adopt it. AI assistants have become standard tools for productivity.

At the same time, senior engineers and tech leads started sharing examples of poorly reviewed AI code. The pattern is clear: Some developers use AI as a thinking partner and ship better code, while others blindly accept suggestions and generate unmaintainable systems.

The tool isn’t the problem. How people use it is.

The comparison to Google and Stack Overflow is obvious. No one expects developers to avoid documentation or search engines. But we do expect them to use these resources well.

This situation changes what good hiring looks like. The goal is no longer to prevent AI use. It is to evaluate how candidates use AI and whether they produce clear, maintainable code even when the tool misleads them.

Companies like Meta and Canva have already made this shift. More are following.

Here’s what to understand about AI fluency and how to update your process.

What AI Fluency Actually Means

Let’s first be more specific about AI fluency. I suggest the following framework, though you can adjust categories to fit your context.

Level 0 AI Avoidant: Is skeptical of AI tools and refuses to use them. Relies on memorization or traditional resources. Sees AI as cheating and won’t trust AI-generated code. Struggles with productivity when peers use AI assistance.

Level 1 AI Dependent: Uses AI-generated code without understanding or verification. Accepts the first suggestion, can’t explain what the code does, and gets stuck when AI gives wrong answers. Can’t debug AI output.

Level 2 AI Competent: Uses AI for boilerplate, refactoring, unit tests, and repetitive tasks but reviews output critically. Catches obvious errors, tests generated code, and explains what AI suggested and why they accepted or rejected it.

Level 3 AI Advanced: Knows when not to use AI. Combines AI with deep technical knowledge to explore edge cases and verify assumptions. Treats AI as a thought partner, not a decision-maker. Works effectively with or without AI.
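
If you want interviewers to apply these levels consistently, it can help to write the decision rule down. The TypeScript sketch below is one possible encoding, not part of the framework itself. The `Observations` fields and the `classifyFluency` function are hypothetical names, and treating Level 3 as "knows when to skip AI and works well without it" is one reading of the descriptions above.

```typescript
// Hypothetical interviewer observations; field names are illustrative only.
interface Observations {
  usesAI: boolean;             // candidate was willing to use the assistant
  verifiesOutput: boolean;     // reviewed, tested, and could explain generated code
  knowsWhenNotToUse: boolean;  // skipped AI when it was the wrong tool
  effectiveWithoutAI: boolean; // kept making progress when AI was wrong or unavailable
}

type FluencyLevel = 0 | 1 | 2 | 3;

const LEVEL_NAMES: Record<FluencyLevel, string> = {
  0: "AI Avoidant",
  1: "AI Dependent",
  2: "AI Competent",
  3: "AI Advanced",
};

// Assumed decision rule: each check rules out one level, lowest first.
function classifyFluency(o: Observations): FluencyLevel {
  if (!o.usesAI) return 0;         // refuses the tool entirely
  if (!o.verifiesOutput) return 1; // accepts output without checking it
  if (o.knowsWhenNotToUse && o.effectiveWithoutAI) return 3;
  return 2;                        // uses AI and reviews it critically
}
```

A rule like this is only a starting point for the debrief. The evidence behind each boolean still has to come from what the interviewer actually saw.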

What do these levels look like in practice? Let’s break down the patterns that separate competent and advanced users from the rest.

Level 2 and 3 engineers share these habits:

Context-rich prompting: Provide relevant code snippets, file paths, and project constraints rather than vague or broad requests.

Incremental integration: Generate code in small chunks, test after each step, and commit frequently.

Critical review: Read all generated code line by line, check for edge cases and architectural fit, and validate against project standards.

Security awareness: Verify package names (up to 20% of AI suggestions reference nonexistent packages), check for hardcoded secrets, and validate input sanitization.

Appropriate skepticism: Treat AI as a pair programmer, not autopilot. Can proceed when AI is wrong or unavailable.
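
The package-name check in the security-awareness habit can be partly automated. The TypeScript sketch below is a rough illustration under a few assumptions: the list of declared packages comes from your project's `package.json`, the function names are hypothetical, and the regex only covers static `import` and `require` statements. A flagged name still needs a manual look at the registry, and a declared name says nothing about whether the package is trustworthy.

```typescript
// Collect package names referenced by import/require statements in a snippet.
function extractPackageNames(source: string): string[] {
  const pattern = /(?:from\s+|require\(\s*|import\s+)["']([^"']+)["']/g;
  const names = new Set<string>();
  let match: RegExpExecArray | null;
  while ((match = pattern.exec(source)) !== null) {
    const specifier = match[1];
    // Relative and absolute paths are local files, not packages.
    if (specifier.startsWith(".") || specifier.startsWith("/")) continue;
    // Scoped packages keep two segments (@scope/name); others keep one.
    const parts = specifier.split("/");
    names.add(specifier.startsWith("@") ? parts.slice(0, 2).join("/") : parts[0]);
  }
  return Array.from(names);
}

// Return packages the snippet references that the project has not declared.
// Callers should include runtime built-ins they use in `declared`.
function findUndeclaredPackages(source: string, declared: string[]): string[] {
  const known = new Set(declared);
  return extractPackageNames(source).filter((name) => !known.has(name));
}

// Example: "express-joi-fast-validator" is a made-up name for illustration.
const generated = `
import express from "express";
import Joi from "joi";
import { fastValidate } from "express-joi-fast-validator";
import { toUserDto } from "./mappers";
`;
const suspicious = findUndeclaredPackages(generated, ["express", "joi"]);
// suspicious is ["express-joi-fast-validator"]
```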

AI fluency shows up differently across engineering tasks. In debugging, it means using AI to generate test cases or explain error messages. In code review, it means catching issues you might miss manually. In architecture discussions, it means exploring design patterns without letting AI make final decisions.

Here’s what this looks like in practice:

A Level 1 engineer might prompt like this:

Create a POST endpoint for user registration.

A Level 2 or 3 engineer provides context and constraints:

Using Express.js with TypeScript, generate the boilerplate for a POST endpoint at /api/users that:

- Accepts JSON payload with email and name

- Includes Joi validation

- Uses async/await error handling

- Returns 201 on success, 400 on validation errors

I’ll add the business logic afterward.

You want engineers who can code without AI but also use AI fluently to improve their productivity.

Remember, though, AI is not a magic wand. It’s just a very powerful tool. Think of it like a power saw: It can cut through work 10 times faster than a hand saw, but without proper technique and safety measures, you can lose a finger. You still need to know carpentry; the tool just amplifies the skill.
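
The same caution applies to the detailed registration prompt above: whatever the assistant returns still has to be checked against the contract the prompt asked for. The TypeScript sketch below writes that contract down without any framework, so it can serve as a reference when reviewing generated code. It is an illustration under assumptions, not the Express and Joi boilerplate itself: hand-rolled checks stand in for Joi, a plain function stands in for the route handler, the names are hypothetical, and the 500 fallback is my addition because the prompt does not specify one.

```typescript
interface RegistrationInput {
  email: string;
  name: string;
}

interface HandlerResult {
  status: 201 | 400 | 500;
  body: Record<string, unknown>;
}

// Stand-in for the Joi schema: returns a list of validation errors.
function validateRegistration(payload: unknown): string[] {
  const errors: string[] = [];
  const data = (typeof payload === "object" && payload !== null
    ? payload
    : {}) as Record<string, unknown>;
  const email = data.email;
  const name = data.name;
  // Deliberately loose email check; a real schema would be stricter.
  if (typeof email !== "string" || !/^[^\s@]+@[^\s@]+\.[^\s@]+$/.test(email)) {
    errors.push("email must be a valid email address");
  }
  if (typeof name !== "string" || name.trim().length === 0) {
    errors.push("name must be a non-empty string");
  }
  return errors;
}

// Stand-in for the route handler: 400 on validation errors, 201 on success.
// `createUser` is the business logic the prompt says will be added later.
async function registerUser(
  payload: unknown,
  createUser: (input: RegistrationInput) => Promise<{ id: string }>,
): Promise<HandlerResult> {
  const errors = validateRegistration(payload);
  if (errors.length > 0) {
    return { status: 400, body: { errors } };
  }
  const input = payload as RegistrationInput;
  try {
    const user = await createUser({ email: input.email, name: input.name });
    return { status: 201, body: { id: user.id } };
  } catch {
    // Assumed fallback: the prompt does not say what to return here.
    return { status: 500, body: { error: "internal error" } };
  }
}
```

A candidate who reviews generated code critically will usually probe the same cases: a missing field, a malformed email, and a failure in the business logic.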

Why Companies Are Making This Shift (and Why You Should Consider It Too)

The traditional approach to technical interviews is changing. Companies are realizing that if AI tools are standard in daily work, they should be part of the hiring process too.

Why? Because you want to see how candidates actually work, not how they perform under artificial constraints. The closer your test mirrors reality, the more reliable the results. Interviews should predict job performance, and job performance now includes AI.

Who’s already making the shift

Companies like Meta, Canva, and Intuit are testing a new approach. They’re not just allowing candidates to use AI tools like Copilot but observing how they use them.

Goldman Sachs has given its engineers access to GitHub Copilot and Gemini Code Assist and is even holding competitions to promote creative AI use among developers.

At Meta, candidates work with an IDE and AI chat window for writing code, debugging, and creating unit tests. Evaluation focuses on how effectively candidates use AI as a tool, integrating it into their process for daily engineering work.

The evaluation format is moving away from pure data structures and algorithms (DSA) questions toward project-based tasks that mimic real engineering challenges. Interviewers assess problem-solving, code quality, verification, and communication, specifically how candidates prompt, debug, and justify AI suggestions.

Candidates must demonstrate control. Doing so shows that they’re the primary engineer, not a passive AI user. They should be catching subtle errors in AI-generated code and iterating on solutions. They should be articulating their prompts, reasoning, and trade-offs. They should be asking targeted questions to get efficient, accurate results.

Many Fortune 500 companies still share Amazon’s stance against allowing AI in interviews. But a growing number are embracing this shift because they want to hire engineers who use new tools to enhance their skills.

Addressing the skeptics

Companies that don’t allow AI in technical assessments have a valid concern. They argue that allowing AI means everyone gets the same AI-generated solution, candidates don’t show their true skills, and AI covers for their weaknesses. Allowing AI does require redesigning the technical evaluation. Without that redesign, you won’t collect meaningful signals.

However, that’s exactly what you want to test: how candidates differentiate themselves when everyone has access to the same tools. Judgment becomes more critical than ever.

The evaluation shifts from “Does it work?” to “How was it built?” Discussions focus on key decisions, major choices, and code structure. Qualities like thoroughness become crucial: writing detailed prompts, refining them when needed, reading responses attentively, and reviewing proposed solutions carefully.

These habits separate engineers who think about tech debt and maintainability from those who just want working code.

Why This Matters for Your Company

Properly evaluating AI fluency leads to better hires in several ways.

Cost implications: When you evaluate candidates using the tools they’ll use on the job, you get a clearer signal faster. Instead of watching them struggle with boilerplate syntax, you see how they think about architecture, edge cases, and trade-offs, things that actually matter in the role.

Quality of hire: Here’s an example drawn from life. Let’s say you hire an intermediate front-end developer who writes good CSS and understands the front end well enough for the level. You give them Copilot access. Suddenly they move fast but create unmaintainable code. They’re using Copilot for the first time or using it irresponsibly. Your hire failed because you didn’t test what they’d actually do on the job.

Karat recently argued that interviews should mirror the job. Its blog states that preventing AI use hampers hiring engineers who can adapt to new tech. Its data suggests that watching how candidates interact with a large language model provides a stronger signal of seniority than traditional whiteboarding. If you’re not collecting this signal, you’re missing valuable input.

Competitive risk: Your competitors are already evaluating candidates’ AI fluency, and you’re losing AI-fluent candidates to them. You don’t need to pivot immediately, but start making your assessment more comprehensive.

Future-proofing: The skill gap is widening. For most product engineering roles, candidates who aren’t AI fluent today will struggle within six months. AI fluency signals that candidates are good learners who stay current and adapt to new tools. This is a strong indicator of future development potential.

These benefits stem from one principle: When interviews mirror how engineers actually work, you get a clearer signal and fewer post-hire surprises. Test the real workflow, and you’ll hire for the real job.

We’re at the beginning of this shift. My projection is that any company hiring product engineers at scale will eventually include an AI-fluency component, whether hands-on testing or interview questions. Since AI is becoming integral to developers’ work, it must be addressed during hiring. Otherwise, you risk unpleasant surprises.