How OpenAI's Codex Team Works and Leverages AI

Insights into how OpenAI's Codex team works and leverages AI, including its team structure, development philosophy, and more. Based on my conversation with the Head of Codex.

Intro

AI is no longer just a tool engineers use. It’s also heavily influencing how engineering teams are structured, how decisions are made, and how software gets built.

I’ve seen this especially in startups and mid-size companies that are trying to adjust their engineering org structures by creating AI-native engineering teams. In my opinion, though, few teams have truly embraced it the way OpenAI’s Codex team has.

Behind products like the Codex app, IDE extensions, and the open-source coding agent is a relatively small group of around 40 people operating with an unusually high level of autonomy, speed, and trust.

Their work spans low-level systems engineering, large-scale distributed infrastructure, product design, research, and user-facing experiences, and they rely heavily on AI across all of it.

What makes the Codex team especially interesting isn’t just what they build, but how they work. They’ve embraced AI as a core layer of their workflow: from planning and onboarding to code review, testing, and prioritization.

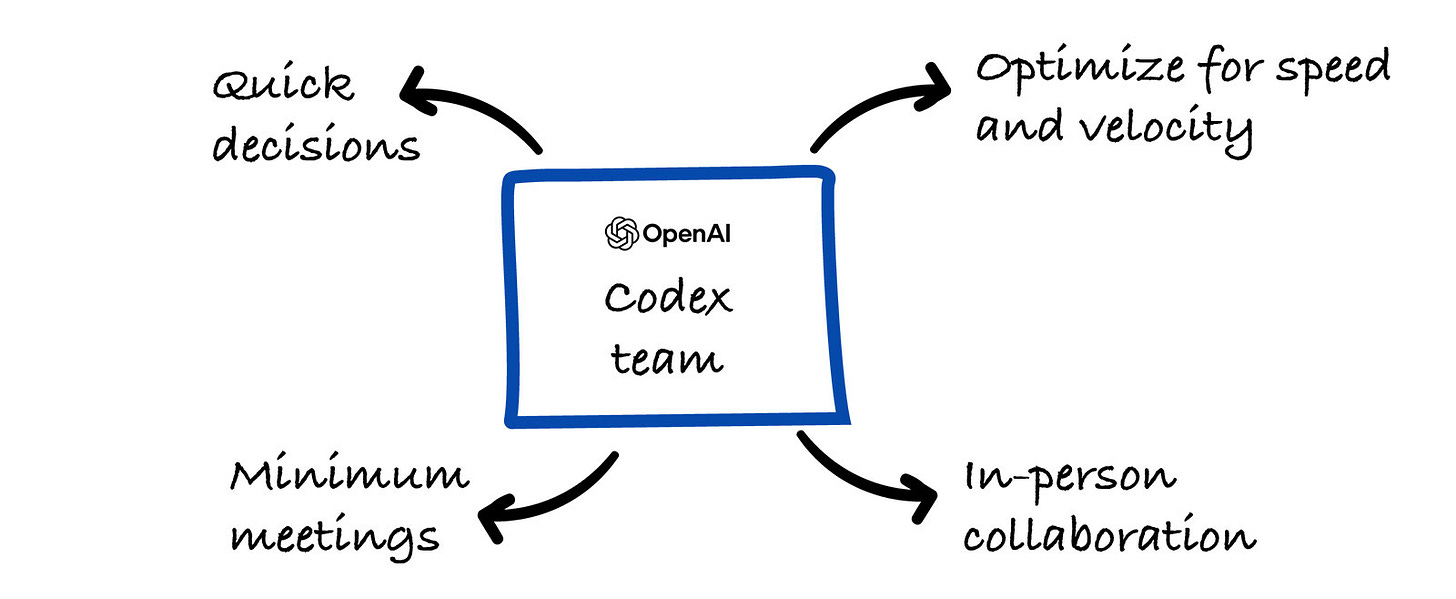

It’s a team with minimal hierarchy, very few meetings, and a single product manager, and it moves at a pace that would otherwise seem unrealistic.

I recently had the pleasure of speaking with Thibault Sottiaux, Head of Codex.

This is the second part of a two-part article. Make sure to also read the first part to learn what exactly AI-native teams are and how to build them.

In this article, we’ll go through how OpenAI’s Codex team works and leverages AI: their team structure, development philosophy, use of Codex internally, and the lessons other engineering teams can apply as we move toward an AI-native future.

Let’s start!

The Codex team is around 40 people

Inside the team, there are many smaller teams that work on different projects, including the open-source coding agent, the Codex IDE extensions, the Codex app, and others.

They run with a strong emphasis on empowerment and local decision-making. It’s closer to a modern version of Bell Labs.

Individuals are trusted to make decisions because the pace of change demands it.

The whole Codex team has one product manager and two designers. Everyone else is an engineer, with expertise varying from person to person.

Here is an example Thibault shared of how effective their PM is.

How their PM works very effectively

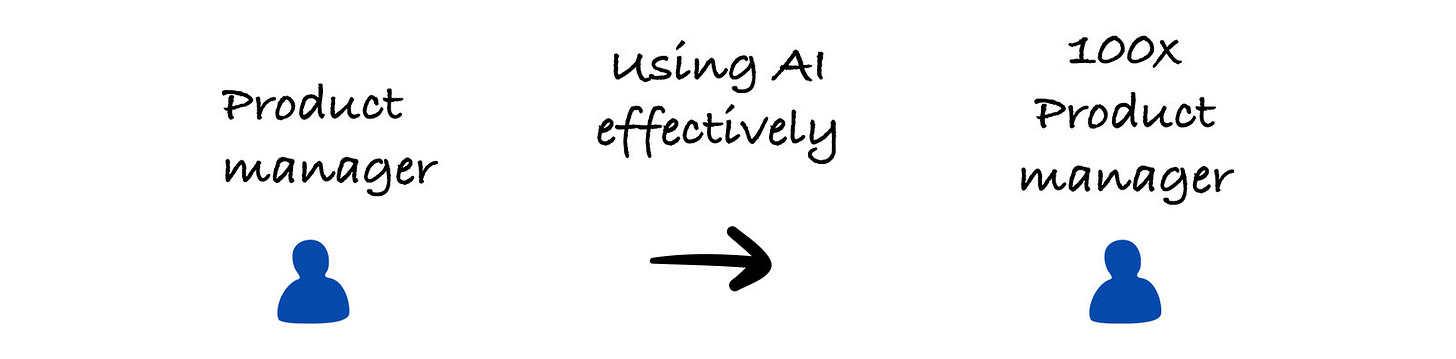

The Codex team is effectively run by a single product manager. The PM has scaled himself into something like a 100× PM by using Codex. Watching him work is unreal. It’s on another level.

He uses Codex to dig through user feedback, triage issues, and prioritize work in real time. During a one-hour bug bash session recently, the whole team was testing the app and logging issues. As the issues came in, the PM was instantly categorizing them, setting priorities, and assigning them to owners.

They went through over 100 issues in that single hour, and most were fixed within 24 hours. That kind of speed and coordination came from the PM’s planning and decision-making, but it simply wouldn’t be possible without AI.

The work they do and the profiles of engineers working on the team

A lot of the work is low-level systems engineering, mostly with Rust.

Much of that work is done on the Codex harness, an internal software framework and runtime logic that powers the Codex AI coding agent. It is essentially the part of the system that orchestrates how Codex behaves, interacts with users, and runs tasks consistently across different interfaces.

Then there’s work on the open-source repo for the Codex CLI coding agent, a tool you can run locally to help with coding tasks directly from your terminal.

On top of that, there’s backend infrastructure that connects the GPUs to the systems that run the models.

They also work on the Responses API, the core API interface for generating model outputs. It is also how Codex talks to the models to do things like read files, run commands, or analyze code.

On top of that, they’ve built their own backend that handles authentication, usage tracking, and sending requests and responses back and forth, so everything works reliably at scale. It’s a large distributed systems effort, and the team maintaining it is on call.

Then there are frontend and full-stack engineers building all their user-facing experiences where people actually use Codex.

They also have researchers on the team, who work on various research efforts aimed at making OpenAI’s models as good as possible.

Development process

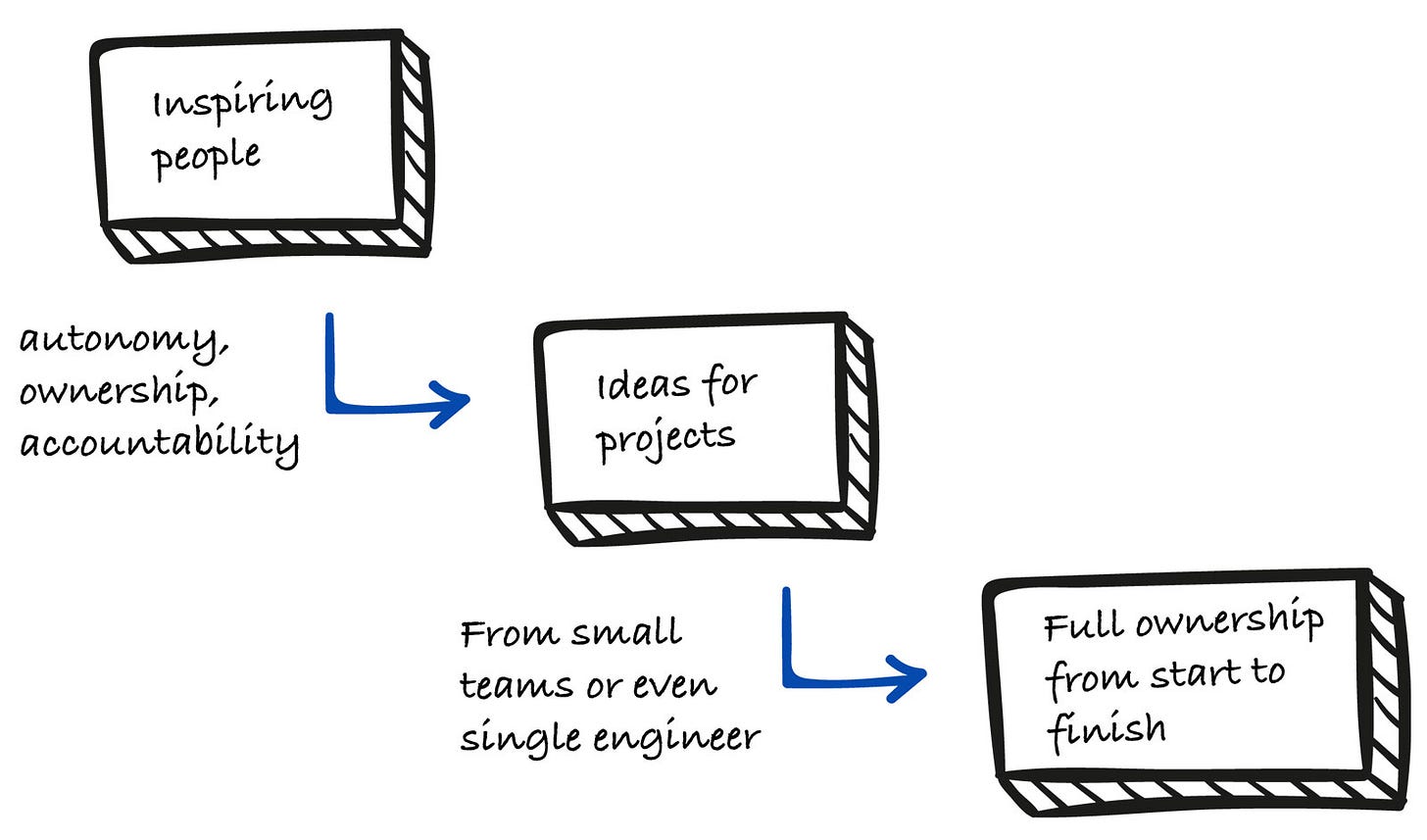

They start with an overall strategy, and from there, the focus is on inspiring people and giving them the autonomy and ownership to do their best work, while still holding them accountable.

The most successful projects usually come from very small teams, often just two or three people. Sometimes an entire feature is built by a single engineer who owns everything end-to-end: planning, implementation, launch, positioning, communication, and then iterating based on user feedback.

That kind of full ownership is strongly encouraged on the Codex team. Most ideas also come bottom-up, driven by people on the team getting excited about trying something new.

They also work very closely with research teams. Often, they’re inspired by new research directions or things they know are coming, and they build ahead of time, whether that’s foundational infrastructure or new product experiences.

It’s overall a very creative process for them. No one has fully figured out the right way to supervise and steer agents yet, and the Codex app is just one expression of their thinking.

Their process also involves a lot of experimentation and ideation. Not everything ships, but people are encouraged and rewarded for exploring new ideas, and they only launch the very best ones.

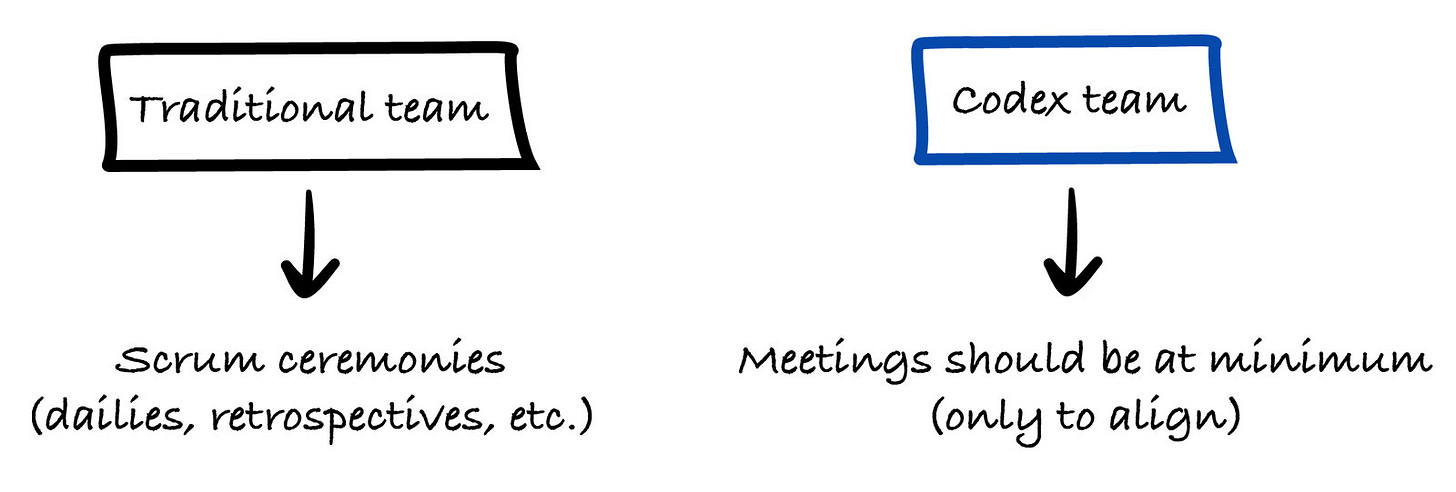

They keep things very lean and minimize meetings as much as possible

When meetings do happen, they’re usually spontaneous and driven by real needs. The office is set up to encourage in-person collaboration, and the leadership team makes itself extremely available so issues can be resolved in minutes, or in hours at worst.

They make decisions very quickly. The cost of making mistakes is much lower because Codex is always available. They can try things, observe what happens, and change course fast if needed.

As a result, they optimize heavily for speed. A lot of the processes that worked a few years ago, or even just two years ago, don’t scale anymore, so they’ve essentially reinvented how they work.

The onboarding buddy for new engineers on the team is Codex

I already mentioned this in the article How to Build AI-Native Engineering Teams.

Thibault shared that Codex walks new hires through onboarding, sets up their entire computer, and helps them understand the codebase, projects, and existing features. It basically acts like a highly skilled engineering mentor.

From initial setup to being productive, most of the onboarding process is handled by the AI tool. As a result, onboarding is much faster than it used to be.

At OpenAI, they also have a strong culture of shipping on day one. It’s really important for every engineer to provide value as soon as possible.

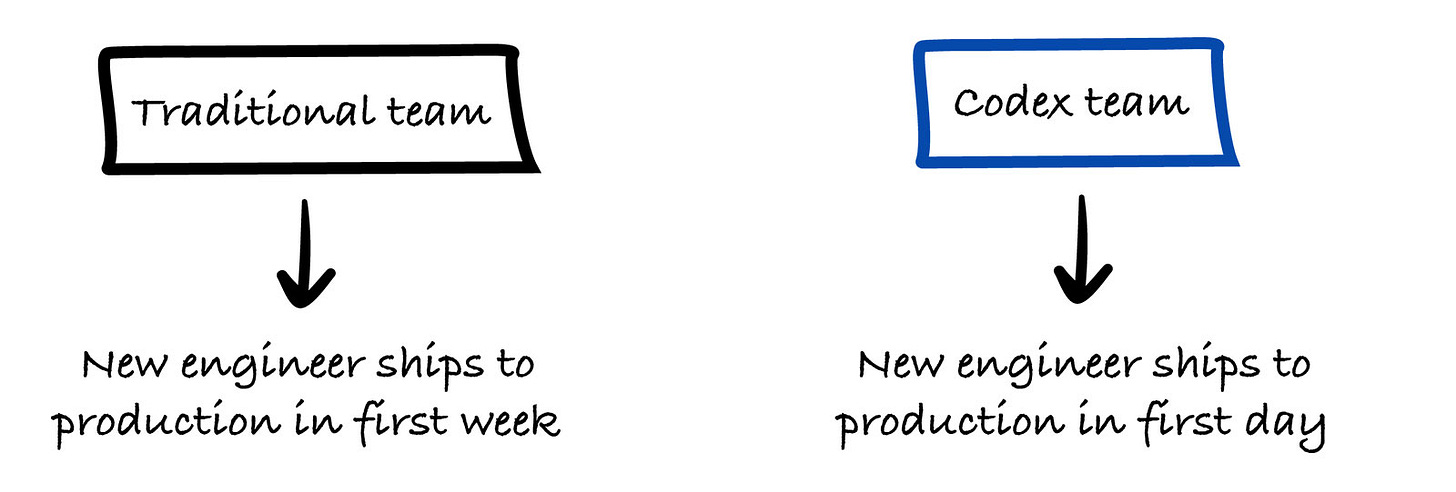

Traditional teams typically aim to have new hires ship code to production within their first week. On the Codex team, the goal is the first day.

With that kind of culture and systems in place, new hires can arrive with no prior context, quickly understand the system, and ship meaningful features on their first day.

Now, let’s move on to how they leverage AI to be productive.